NVIDIA open models provide the foundation to innovate across agentic AI, robotics, autonomous vehicles, and research. 🤖 This release contributes 10 trillion language tokens, 500,000 robotics trajectories, and 100 terabytes of vehicle sensor data to the community. These frameworks are designed to significantly accelerate specialized AI development and discovery. Learn more: https://t.co/ihG2XJHkMI

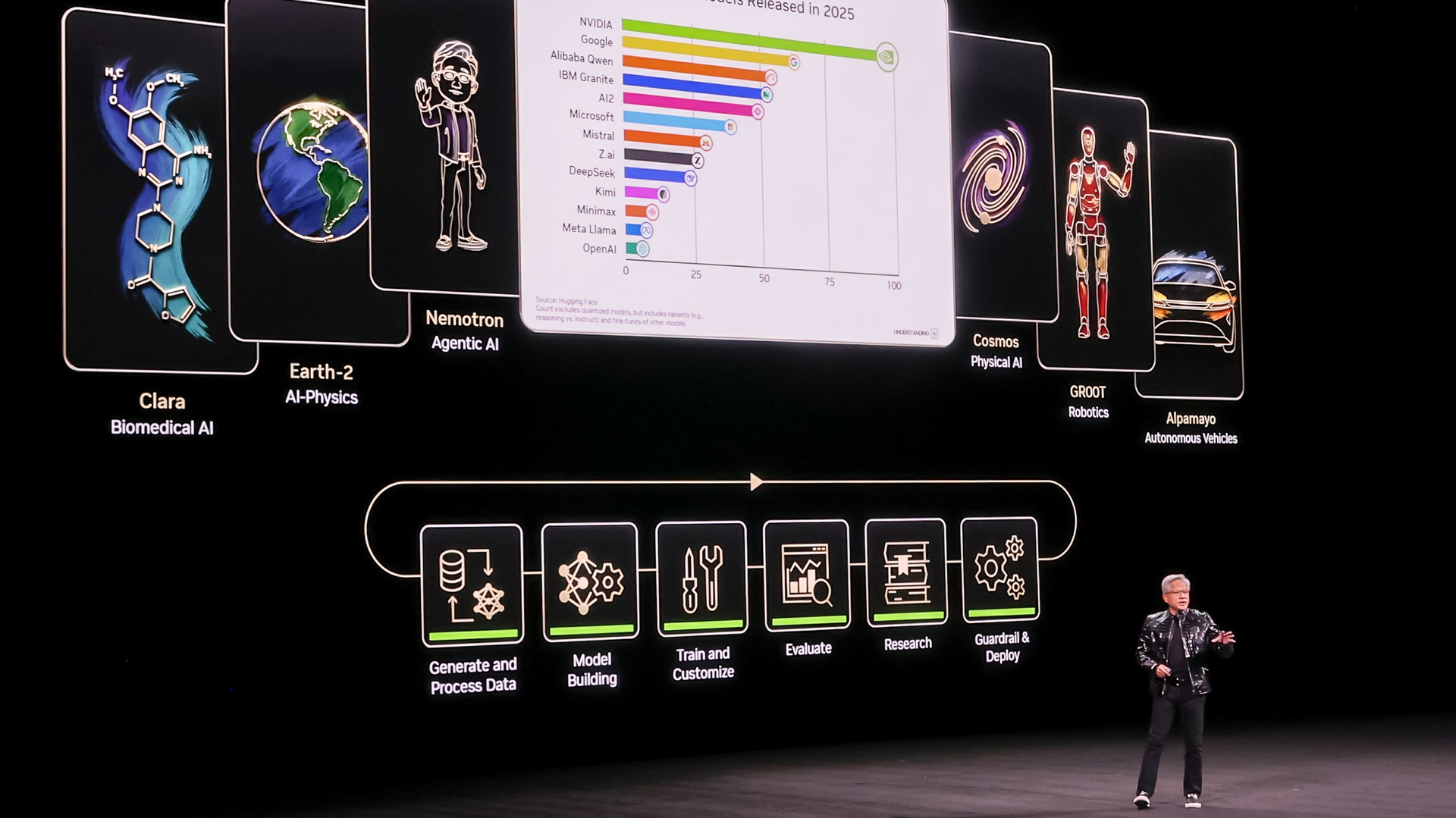

Keynote slide/infographic showing NVIDIA’s Open Model Ecosystem across domains—Clara (biomedical research), Earth‑2 (AI‑physics), Nemotron (agentic AI), Cosmos (physical AI), GR00T (robotics), and Alpamayo (autonomous vehicles). It visually supports the tweet’s point that NVIDIA’s open models provide a common foundation spanning agentic AI, robotics, AV, and research.

Source: NVIDIA Newsroom

Research Brief

What our analysis found

NVIDIA has launched what it describes as one of the world's largest collections of open multimodal data, contributing 10 trillion language training tokens, 500,000 robotics trajectories, 455,000 protein structures, and 100 terabytes of vehicle sensor data to the AI development community. The announcement, made during CES week in January 2026 and reinforced at GTC 2026 in March, positions NVIDIA as a major provider of open foundation resources spanning agentic AI, robotics, autonomous vehicles, and scientific research. The company also formed a Nemotron Coalition with eight research labs to co-develop open frontier models.

The datasets and models are substantial in scope. NVIDIA's autonomous vehicle dataset on Hugging Face includes 1,727 hours of synchronized multi-sensor driving data collected across 25 countries, while its robotics data encompasses 57 million grasps and 15 terabytes of multimodal information for its GR00T physical AI platform. Nemotron model documentation confirms training corpora exceeding 10.6 trillion tokens, of which 3.53 trillion are synthetic. These resources feed into model families including Cosmos for world models, Isaac GR00T for robotics, and Alpamayo for autonomous vehicles.

However, the meaning of "open" in NVIDIA's release warrants scrutiny. Several of the datasets and models are distributed under proprietary NVIDIA licenses rather than standard open-source terms. The autonomous vehicle dataset, for instance, restricts usage to internal development using NVIDIA technology, while some AV models carry non-commercial licenses. Industry analysts have noted that many companies' "open" AI releases are more accurately described as "open-weights," lacking full transparency around training data provenance — a distinction that is particularly relevant given ongoing litigation alleging NVIDIA used copyrighted material in its language model training.

Fact Check

Evidence from both sides

Supporting Evidence

Official blog confirms all headline figures

NVIDIA's January 2026 blog post explicitly states contributions of 10 trillion language training tokens, 500,000 robotics trajectories, 455,000 protein structures, and 100 terabytes of vehicle sensor data, directly matching the tweet's claims (blogs.nvidia.com).

Model documentation corroborates token counts

Nemotron model cards on NVIDIA's build platform repeatedly state training data exceeding 10 trillion tokens, with the Nano-30B A3B card specifying 10.6 trillion tokens including 3.534 trillion synthetic tokens (build.nvidia.com).

Hugging Face dataset pages verify AV data specifications

The PhysicalAI-Autonomous-Vehicles dataset on Hugging Face confirms approximately 100 TB of data comprising 1,727 hours of multi-sensor driving data from 25 countries, with license and terms publicly posted (huggingface.co).

Robotics trajectory count confirmed independently

A Hugging Face blog post titled "How NVIDIA Builds Open Data for AI" confirms the availability of 500,000+ robotics trajectories along with 57 million grasps and 15 TB of multimodal data for the GR00T physical AI initiative (huggingface.co).

Open-source pipelines and tooling released publicly

NVIDIA has open-sourced synthetic driving data generation tools including the Cosmos-Drive-Dreams project with associated datasets and model weights available on GitHub and arXiv (arxiv.org).

GTC 2026 announcements reinforce open model strategy

At GTC 2026, NVIDIA announced new open models for agentic AI, robotics, and autonomous vehicles, and established the Nemotron Coalition with eight labs to co-develop open frontier models (nvidia.com).

Contradicting Evidence

"Open" does not mean open source by standard definitions

NVIDIA's Open Model License and Nemotron Open Model License include proprietary conditions such as "Trustworthy AI" obligations and are not OSI-approved open-source licenses, meaning the term "open" is used more loosely than many in the community would expect (nvidia.com).

Autonomous vehicle data carries restrictive usage terms

The PhysicalAI-Autonomous-Vehicles dataset on Hugging Face limits usage to "solely for your internal development using NVIDIA technology," which is significantly more restrictive than standard open data licenses and effectively ties users to NVIDIA's ecosystem (huggingface.co).

Some AV models are non-commercial only

Certain released models such as Alpamayo-R1-10B carry non-commercial license terms, limiting their usefulness for the broader commercial developer community despite being described as open contributions (huggingface.co).

Industry-wide skepticism about "open" AI claims

Analyses from outlets including IEEE Spectrum have noted that many AI companies' "open" releases are more accurately characterized as open-weights, lacking fully open training data, complete reproducibility, or standard open-source licensing — context that applies to NVIDIA's claims as well (spectrum.ieee.org).

Ongoing litigation raises training data provenance concerns

Novelists and authors have filed lawsuits alleging NVIDIA used copyrighted materials from shadow libraries to train its language models, complicating the narrative of transparent and community-oriented open data contributions (arstechnica.com).

Report an Issue

Found something wrong with this article? Let us know and we'll look into it.